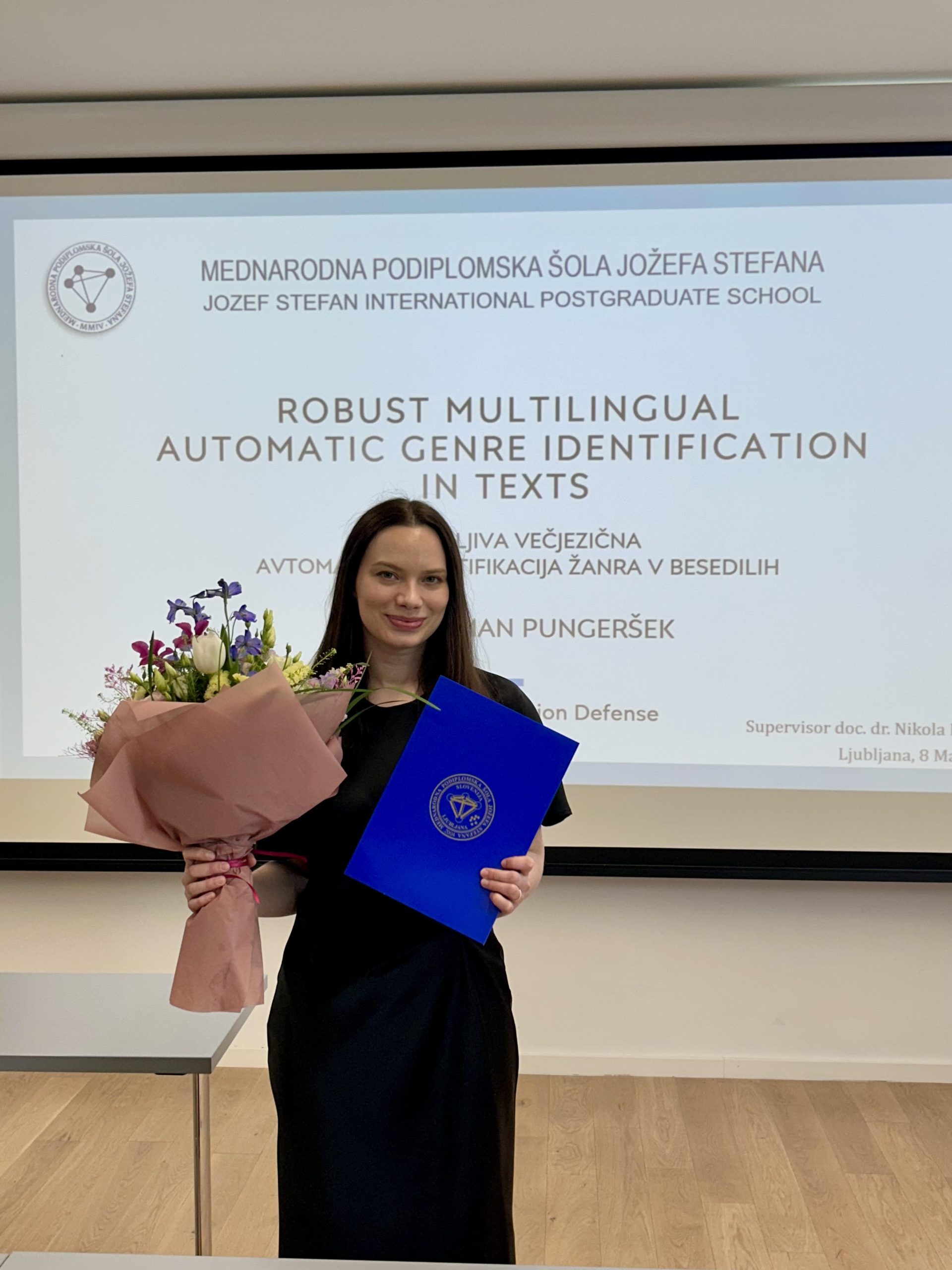

Taja Kuzman Pungeršek successfully defended her doctoral thesis titled Robust Multilingual Automatic Genre Identification in Texts.

Congratulations!

Abstract:

Collecting texts from the web has significantly accelerated the creation of large text datasets, which are essential for the development of advanced language technologies, including large language models. However, because these texts are gathered automatically, their linguistic and functional characteristics are largely unknown, limiting their reliable use in research and applications. Automatic genre identification, a text classification task that categorizes texts into specific genre categories, provides key insights into such large-scale text collections and enables their filtering for applications in language technology and linguistic research.

This thesis advances automatic genre identification for web-scale multilingual text data through the creation of novel genre schemata, the development of manually annotated genre datasets, and the exploration of robust machine learning methods. First, the study introduces new genre schemata designed to improve annotation reliability and cross-schema comparability, thereby addressing a key limitation of prior work where incompatible schemata hindered meaningful comparison across studies. We demonstrate that high-quality manual annotation with acceptable inter-annotator agreement can be achieved through a carefully designed genre schema, detailed guidelines, and the employment of expert annotators.

To address the lack of high-coverage genre datasets in our target languages, we develop high-quality, manually annotated genre datasets in Slovenian and English, as well as multilingual test collections spanning eleven typologically diverse languages and different scripts. Building on the test datasets, we establish the AGILE benchmark, which enables standardized, reproducible cross-dataset and cross-lingual evaluation of genre classifiers. The benchmark also supports a systematic comparison of modern large language models across languages.

Using this infrastructure, we develop genre classifiers that achieve robust performance across various languages, datasets, and evaluation settings. To ascertain which machine learning methodology offers the most robust generalization, we experiment with a range of text classification techniques, from traditional non-neural machine learning methods to cutting-edge approaches based on large language models. Our findings indicate that a BERT-like model, fine-tuned on our newly developed training dataset, achieves state-of-the-art performance across various datasets and languages, including languages using non-Latin scripts and languages not closely related to the fine-tuning languages. The resulting model is publicly released, providing a practical tool for large-scale automatic genre annotation of multilingual web text collections.

Furthermore, we propose the LLM Teacher-Student Framework, a novel approach for training text classifiers without manually annotated data. By leveraging a large language model to generate training labels, this method enables scalable, cost-efficient development of genre classifiers, particularly for low-resource languages and specialized domains, and is applicable beyond genre identification to other text classification tasks.
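
The general teacher-student idea described in the abstract can be illustrated with a minimal, dependency-free sketch. This is not the thesis's implementation: `teacher_label` is a hypothetical keyword-based stand-in for a call to a large language model, the two genre labels and the example texts are invented for illustration, and the student is a tiny Naive Bayes classifier chosen only so the example runs without external libraries.

```python
import math
from collections import Counter, defaultdict

# Hypothetical cue words; a real teacher would be an LLM prompted for a genre label.
INSTRUCTION_CUES = {"install", "step", "mix"}


def teacher_label(text):
    """Stand-in for an LLM teacher: assigns a genre label to an unlabeled text."""
    words = set(text.lower().split())
    return "Instruction" if words & INSTRUCTION_CUES else "News"


class NaiveBayesStudent:
    """Small multinomial Naive Bayes classifier with add-one smoothing."""

    def fit(self, texts, labels):
        self.label_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)
        for text, label in zip(texts, labels):
            self.word_counts[label].update(text.lower().split())
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        return self

    def predict(self, text):
        total_docs = sum(self.label_counts.values())
        best_label, best_score = None, -math.inf
        for label, n_docs in self.label_counts.items():
            # Log prior plus smoothed log likelihood of each token.
            score = math.log(n_docs / total_docs)
            denom = sum(self.word_counts[label].values()) + len(self.vocab)
            for word in text.lower().split():
                score += math.log((self.word_counts[label][word] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Unlabeled texts (invented examples): no manual annotation is involved.
unlabeled_texts = [
    "first install the package then run the setup step",
    "mix the flour and then follow each step carefully",
    "the government announced a new budget on monday",
    "the minister said the election results were final",
]

# Step 1: the teacher generates training labels.
silver_labels = [teacher_label(t) for t in unlabeled_texts]

# Step 2: the student is trained only on the teacher-generated labels.
student = NaiveBayesStudent().fit(unlabeled_texts, silver_labels)

# Step 3: the student classifies new texts without calling the teacher.
print(student.predict("install the update and run each step"))
print(student.predict("the minister announced the budget"))
```

In a realistic setting, the teacher would be an actual large language model and the student a stronger classifier; the appeal of the design is that the expensive teacher is queried only once to label training data, while the cheaper student handles large-scale annotation.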